Cross-Modal Synchronization and Calibration

Infrared–RGB Stereo Depth Transfer

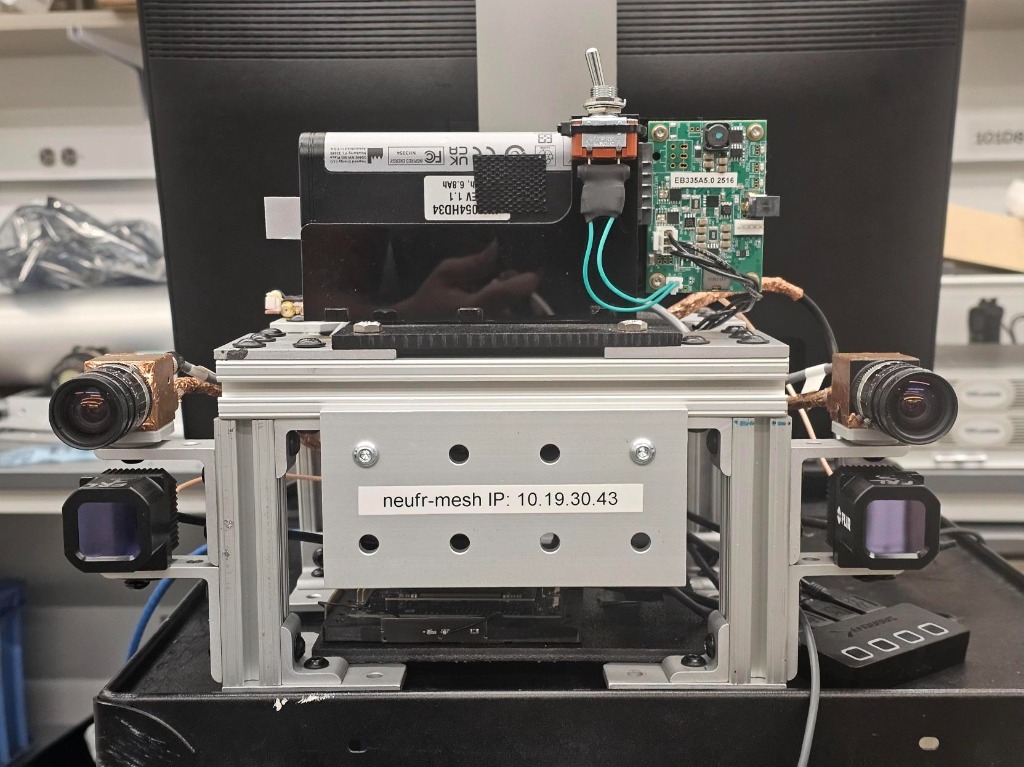

Front View: RGB & Thermal Stereo Pairs

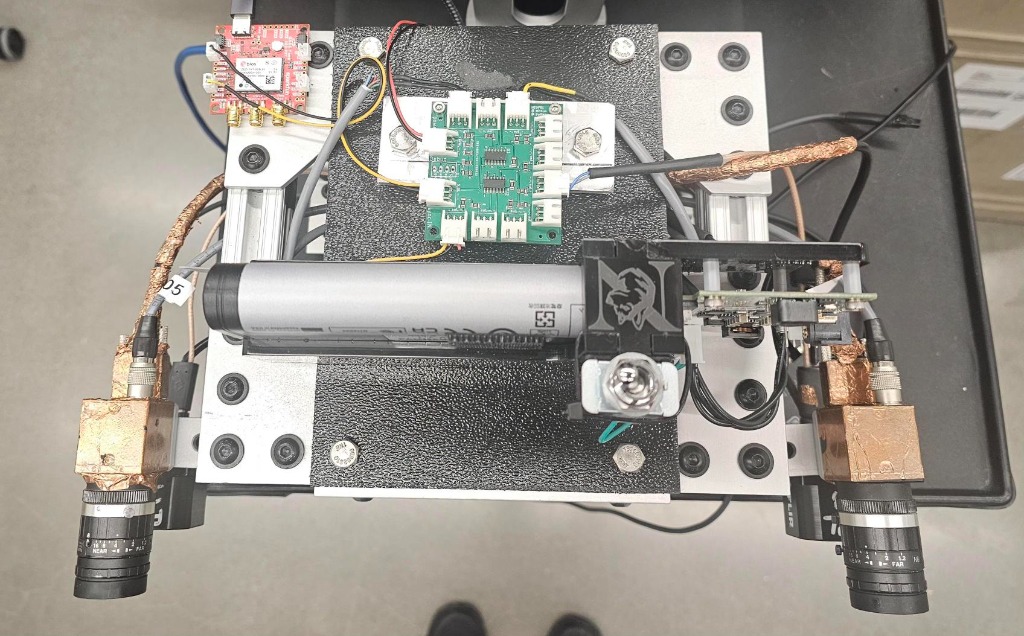

Top View: Synchronization Hardware

Cross-Modal Distillation

This project tackles the challenge of estimating depth from thermal imagery, where ground-truth data is scarce.

Strategy: We leverage the rich geometric feature representations of a pre-trained RGB depth estimation model (the "Teacher") to supervise and train a lightweight infrared stereo network (the "Student").

Method: By transferring depth knowledge from the data-rich RGB domain, we enable the IR pipeline to learn robust depth estimation without requiring large annotated thermal datasets.
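The exact distillation loss is not specified here. As a minimal sketch, assume the Student is a stereo network that predicts disparity in the IR view, and that the Teacher's depth has already been reprojected into that view. The Teacher depth can then be turned into a disparity pseudo-label with d = f·B/Z and compared against the Student with a masked L1 loss. The function names, the L1 choice, and the "0 or NaN means no label" convention are illustrative assumptions, not the project's actual implementation.

```python
import numpy as np


def depth_to_disparity(depth_m, focal_px, baseline_m, eps=1e-6):
    """Convert metric depth Z to stereo disparity d = f * B / Z (in pixels)."""
    return focal_px * baseline_m / np.maximum(depth_m, eps)


def distillation_loss(student_disp, teacher_depth_ir, focal_px, baseline_m):
    """Masked L1 loss between Student disparity and Teacher pseudo-labels.

    Hypothetical convention: teacher_depth_ir is the Teacher's depth already
    reprojected into the IR view, with 0 or NaN where no estimate landed.
    """
    finite = np.isfinite(teacher_depth_ir)
    # Valid pixels have a finite, positive Teacher depth.
    valid = finite & (np.where(finite, teacher_depth_ir, 0.0) > 0)
    if not valid.any():
        return 0.0
    target = depth_to_disparity(teacher_depth_ir[valid], focal_px, baseline_m)
    return float(np.mean(np.abs(student_disp[valid] - target)))


# Usage: f = 100 px, B = 0.1 m, so Z = 2 m maps to d = 5 px and Z = 4 m to d = 2.5 px.
student = np.full((2, 2), 10.0)
teacher = np.array([[2.0, 0.0], [np.nan, 4.0]])
loss = distillation_loss(student, teacher, focal_px=100.0, baseline_m=0.1)
```

Masking matters because reprojected Teacher depth is typically sparse or has holes at occlusions, and those pixels should not contribute a gradient.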

Spatio-Temporal Infrastructure

To validate this distillation process, I designed and built a custom hardware-software pipeline that bridges the two modalities and produces spatially and temporally aligned data for training and evaluation.

- Temporal Alignment (Time Sync): Implemented hardware triggering to achieve microsecond-level synchronization between RGB and Infrared sensors, effectively eliminating motion artifacts in dynamic scenes.

- Spatial Alignment (Calibration): Developed a unified calibration framework to accurately estimate extrinsic transformations between the multimodal sensors, enabling precise reprojection and overlay of visual data.
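Even with hardware triggering, the logged streams still have to be paired frame by frame, and the residual offset is a direct check on synchronization quality. Below is a minimal sketch, assuming both sensors report sorted timestamps in microseconds on a shared clock. The function name `pair_frames` and the 100 µs tolerance are hypothetical choices for illustration.

```python
from bisect import bisect_left


def pair_frames(rgb_ts_us, ir_ts_us, tol_us=100):
    """Pair each RGB frame with the nearest IR frame within tol_us.

    Assumes both timestamp lists are sorted and in microseconds on a
    shared clock. Returns (rgb_index, ir_index, offset_us) tuples.
    """
    pairs = []
    for i, t in enumerate(rgb_ts_us):
        j = bisect_left(ir_ts_us, t)
        best = None
        # Candidates: the IR frame just before t and the one at/after t.
        for k in (j - 1, j):
            if 0 <= k < len(ir_ts_us):
                off = ir_ts_us[k] - t
                if best is None or abs(off) < abs(best[1]):
                    best = (k, off)
        # Drop frames whose nearest partner is outside the tolerance.
        if best is not None and abs(best[1]) <= tol_us:
            pairs.append((i, best[0], best[1]))
    return pairs


rgb = [0, 33333, 66666]
ir = [5, 33340, 70000]
pairs = pair_frames(rgb, ir)
```

Here the third RGB frame is dropped because its nearest IR frame is 3334 µs away. With hardware triggering working as intended, every pair's offset should stay well inside the tolerance, so a dropped frame is a useful signal that the trigger chain misfired.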

Overlay Visualization

Synchronized overlay of RGB and Thermal streams demonstrating precise spatial and temporal alignment.
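An overlay like this relies on reprojecting pixels from one camera into the other using the calibrated intrinsics and extrinsics. The following is a minimal pinhole-model sketch, assuming a depth value is available for each thermal pixel, lens distortion has already been removed, and the extrinsics follow the convention X_rgb = R · X_ir + t. `reproject_ir_to_rgb` is a hypothetical helper, not part of the project's published code.

```python
import numpy as np


def reproject_ir_to_rgb(u, v, depth_m, K_ir, K_rgb, R, t):
    """Map IR pixels (u, v) with known depth into RGB pixel coordinates.

    Assumed conventions: u, v, depth_m are 1-D arrays of equal length;
    R (3x3) and t (3,) take points from the IR frame to the RGB frame.
    """
    pix = np.stack([u, v, np.ones_like(u, dtype=float)])  # 3 x N homogeneous pixels
    rays = np.linalg.inv(K_ir) @ pix                      # normalized rays with z = 1
    X_ir = rays * depth_m                                 # back-project to 3-D (IR frame)
    X_rgb = R @ X_ir + t.reshape(3, 1)                    # rigid transform to RGB frame
    proj = K_rgb @ X_rgb                                  # project with RGB intrinsics
    return proj[0] / proj[2], proj[1] / proj[2]


K = np.array([[100.0, 0.0, 50.0], [0.0, 100.0, 40.0], [0.0, 0.0, 1.0]])
u_rgb, v_rgb = reproject_ir_to_rgb(
    np.array([50.0]), np.array([40.0]), np.array([2.0]),
    K, K, np.eye(3), np.array([0.1, 0.0, 0.0]),
)
```

With a purely horizontal 0.1 m offset and f = 100 px, a point at 2 m shifts by f·B/Z = 5 px, which is the same disparity relation used by the stereo Student. Sampling the RGB image at the returned coordinates and alpha-blending it with the thermal frame gives the overlay.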